The 4P Method: how to quickly find new results

Exploratory framework and examples

As a person deeply passionate about investigations, I regularly see search challenges in both my personal life and that of others. These challenges range from straightforward questions such as “How do I find a good restaurant for dinner tonight?” to more complex ones such as “Where can I download the firmware for an unknown microcontroller board?”

Every time I resolve such challenges (or even when days of searching don’t yield satisfactory results), my mindset as an engineer pushes me to shift towards an automated solution — involving tools or, for broader tasks, the development of frameworks and systematic approaches.

Of course, we have many books, articles, guides, and courses covering some commonplace cases/scenarios (Cybersecurity, SOCMINT, GEOINT, etc.) or areas of human activity (e.g. Maritime OSINT). But we find ourselves at the start of a journey when it comes to relatively uncommon tasks, rarely dealt with by community contributors, influencers, and trainers. This means we must extensively search online and immerse ourselves in the new field to understand its complexities. Alternatively, consulting subject experts is a viable option, especially when time constraints limit our ability to conduct an in-depth exploration.

Consider the following task: “Finding DNA by protein sequences”. Ok, this might be a tough nut to crack, but what about… “How to find dumpling dough in Bruges?”.

Facing such challenges (did I already say that I see search tasks all around me?), I usually create approaches and tools that allow me to achieve results quickly. Some of them, such as the SOWEL framework (for SOCMINT tasks), are published and developed with the community. In this article, I’ll focus on the 4P method.

The 4P Method

TL;DR: 4 steps to quickly identify sources of information in an uncharted field.

1. Purpose — Define the area of knowledge (domain) you wish to research. Ensure that it is broad enough to encompass all elements which are relevant to the topic at hand.

2. Principles — Next, identify crucial terms (nouns) based on the corresponding area of knowledge and your search question. They are usually subjects and objects of some actions.

3. Practices — Outline typical processes, procedures, practices and practical habits, employing verbs, to connect the above-mentioned terms.

4. Pathways — Lastly, analyse the information trajectory: its flow, origins and destinations during the previously identified practices.

By the end of this process, you will have a list of well-founded hypotheses regarding information sources and searching techniques within an area of knowledge, along with a fundamental grasp of the pertinent processes.

You might think, "Alright, but I would have approached it similarly. There's nothing groundbreaking here! I will engage in brief research cycles to deepen my understanding of the domain and to address my specific queries."

You are right, but without a structured approach it is easy to overlook certain aspects and neglect hypotheses, especially when dealing with unfamiliar subjects.

Adopting a structured methodology is beneficial as it ensures comprehensive coverage of all elements and facilitates the potential automation of the process. This structure also opens up the possibility of delegating and distributing certain tasks and parts of research work, rather than managing everything individually.

A book search

Let’s look at an example to get the idea.

A few years ago, I was searching for a hard copy of a book that had been published in a limited edition. Using the 4P method, I gathered the following information:

Purpose: book market

Principles: book, title, author, publishing house, bookstore, ISBN, ISSN, library, book owner, book content, electronic catalogue, antiquarian books.

Practices:

- Publishing houses print books and assign them ISBN/ISSN numbers, then send them to stores;

- Stores put books into databases and publish them on websites;

- People or libraries buy books;

- People exchange books (bookcrossing) and sell them on classified advertising websites;

- People scan and publish electronic versions of books;

- People write reviews and discuss books;

- Libraries publish catalogues;

- Companies make office libraries (B2B) for employees with printed/electronic books.

Pathways:

- The publishing house prepares a book, writes a description, and knows where the edition is sent;

- Websites and libraries offer the possibility to search in electronic catalogues;

- People post photos and descriptions of books on social networks and classified advertising websites;

- Online catalogues are created for searching electronic versions.
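The model above can also be written down as a small data structure, which makes gaps easier to spot. This is only a minimal sketch: the class names `FourPModel` and `Practice` and the `unused_principles` helper are hypothetical, invented here for illustration, and only a handful of the terms and practices from the example are included.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Practice:
    """One practice written as a subject-verb-object triple."""
    subject: str
    verb: str
    obj: str


@dataclass
class FourPModel:
    """Hypothetical container for the four steps of a 4P model."""
    purpose: str
    principles: list[str]
    practices: list[Practice] = field(default_factory=list)
    pathways: list[str] = field(default_factory=list)

    def unused_principles(self) -> list[str]:
        """Return principles that no practice mentions yet (possible blind spots)."""
        used = {p.subject for p in self.practices} | {p.obj for p in self.practices}
        return [term for term in self.principles if term not in used]


# A reduced version of the book example (not the full lists from the article).
book_model = FourPModel(
    purpose="book market",
    principles=["book", "publishing house", "bookstore", "ISBN", "library",
                "electronic catalogue", "book owner"],
    practices=[
        Practice("publishing house", "prints", "book"),
        Practice("publishing house", "assigns", "ISBN"),
        Practice("bookstore", "lists", "book"),
        Practice("library", "publishes", "electronic catalogue"),
    ],
    pathways=["Websites and libraries offer search in electronic catalogues"],
)

print(book_model.unused_principles())  # ['book owner']
```

Here the leftover term "book owner" hints at a practice that has not been written down yet, such as owners selling books on classified advertising websites.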

So, after applying this process, I understood that I had to check ads on specific websites and social media, search the catalogues of certain bookshops, and contact the publishing house if the previous steps failed. I was also lucky to find a non-indexed platform selling rare books, and found the target there!

Additionally, I discovered some intriguing resources, such as Book Shazam (hopelessly outdated by now) and the oldest (in a specific region) website for rare books (where I later could purchase some fascinating items related to open-source intelligence!)

The attentive reader might realise that the source where I ultimately located the book isn't mentioned in the 4P example above. Indeed, my approach didn’t produce a straightforward, beacon-lit path to the "Download book here!" button. Instead, by navigating through the 4P process, I understood where and how information about books comes to the surface and, specifically, where people sell their books, and that was enough of a victory for me!

But if you're a little bored, try to answer the question using this technique: “What is the height of the SEGU1131030 shipping container?”
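One possible first move for the "Principles" step is to take the identifier itself apart. The sketch below assumes that the number follows the ISO 6346 layout (three-letter owner code, one category letter, six-digit serial, one check digit), which the article does not state. The function names are hypothetical. The number on its own does not state the height, so this only confirms that the identifier is well-formed before you start looking for sources.

```python
import re


def _letter_values() -> dict[str, int]:
    """ISO 6346 letter values: start at A=10 and skip multiples of 11."""
    values, n = {}, 10
    for ch in "ABCDEFGHIJKLMNOPQRSTUVWXYZ":
        if n % 11 == 0:
            n += 1
        values[ch] = n
        n += 1
    return values


LETTER_VALUES = _letter_values()


def check_digit(first_ten: str) -> int:
    """Compute the check digit from the first ten characters of the number."""
    total = sum(
        (LETTER_VALUES[c] if c.isalpha() else int(c)) * 2 ** i
        for i, c in enumerate(first_ten)
    )
    return total % 11 % 10


def parse_container_number(number: str) -> dict:
    """Split a container number into its parts and verify the check digit."""
    number = number.upper()
    match = re.fullmatch(r"([A-Z]{3})([UJZ])(\d{6})(\d)", number)
    if match is None:
        raise ValueError(f"not an ISO 6346-style number: {number!r}")
    owner, category, serial, digit = match.groups()
    return {
        "owner": owner,
        "category": category,
        "serial": serial,
        "check_digit_ok": check_digit(number[:10]) == int(digit),
    }


print(parse_container_number("SEGU1131030"))
```

The owner code and category that come out of this are natural candidates for the list of nouns in step 2.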

Structured data

After recognising the potential of this method, I became fascinated with the concept of leveraging semantic web features to simplify the creation of 4P models. Also, structured data is abundant in the form of thesauruses, rubricators, glossaries, dictionaries, and ontologies, all incredibly useful for this purpose!

I dedicated several weeks to developing a proof-of-concept tool to collect information surrounding key concepts from Wikidata, which includes over 100 million data items that are thoroughly described, interlinked, and verified.

For instance, collecting information about books from the previous example using Wikidata can yield a comprehensive understanding of all related concepts with their descriptions and provide links to websites where you can locate a book by its ISBN. This method enhances the efficiency and breadth of your search, connecting you directly to valuable resources!
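To give a feel for this kind of lookup, here is a minimal sketch that is not the proof-of-concept tool itself. It assumes the public Wikidata Query Service SPARQL endpoint and uses Q571, the Wikidata item for "book". The function names are hypothetical, and `run_query` needs network access, so it is defined but not called here.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

# Public SPARQL endpoint of the Wikidata Query Service.
WDQS_ENDPOINT = "https://query.wikidata.org/sparql"


def subclass_query(qid: str, limit: int = 20) -> str:
    """Build a SPARQL query listing direct subclasses (P279) of an item."""
    return (
        "SELECT ?item ?itemLabel ?itemDescription WHERE {\n"
        f"  ?item wdt:P279 wd:{qid} .\n"
        '  SERVICE wikibase:label { bd:serviceParam wikibase:language "en". }\n'
        "}\n"
        f"LIMIT {limit}"
    )


def query_url(sparql: str) -> str:
    """Encode the query into a GET URL that asks for JSON results."""
    return WDQS_ENDPOINT + "?" + urlencode({"query": sparql, "format": "json"})


def run_query(sparql: str) -> list[dict]:
    """Send the query and return the result rows (requires network access)."""
    request = Request(
        query_url(sparql),
        headers={"User-Agent": "4p-sketch/0.1 (illustrative example)"},
    )
    with urlopen(request, timeout=30) as response:
        return json.load(response)["results"]["bindings"]


# Q571 is the Wikidata item for "book".
print(subclass_query("Q571", limit=5))
```

Each returned row carries a label and a description, which can feed the list of terms in the "Principles" step.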

But after days of graph searches with GraphQL and a training course dedicated to graph analysis and knowledge bases (I'm stubborn, or rather persistent, enough), I came to understand the real challenge of mining Wikidata for a specific domain: you have to define the domain and its boundaries and align them with your search objective, which is extremely hard to do without human analysis… Additionally, Wikidata's coverage differs significantly across topics, which makes such automation much more difficult.

Did I mention that this was before the ChatGPT boom?

Here come LLMs

Have you already thought, 'What if I ask ChatGPT to do it?' Well, it works!

While the result might not be groundbreaking, it will offer a laconic overview of the requested knowledge area. And you can ask the LLM for examples of sources, and it will provide them! Here is the prompt I used:

Imagine that you are an OSINT specialist searching for information on the Internet. You use a method (listed below) that forces you to think step by step and find non-obvious sources of information. You seek information to answer a question "How to buy a rare book in Amsterdam?".

The method:

You are describing the topic "rare books market" following the next steps.

1. You list the most common nouns (concepts) related to the topic.

2. You list all the processes associated with the words from first step and the topic in "subject verb object" format (for example, "a publisher publishes a book").

3. You list information systems where information about the concepts of the topic appears.

4. You list the URLs of information systems where information about the concepts of our topic appears.

The answers to each item must be numbered. All lists should be sorted in descending order of helpfulness, and each list item should be on a new line (essential to make the resulting text readable!). In each answer, there must be at least 10 results. Clearly specify if some URLs are hypothetical.

And the answer (current as of January 2024) is here.

Not bad, huh?

You can ask LLMs for more concepts, processes, information systems examples… Ask anything additional to dig into this topic. You're no longer staring into a vast unknown; you have clear paths and crossroads leading everywhere.
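If you plan to reuse the prompt for other questions, it can be turned into a small template. The sketch below only builds the text and does not call any LLM API. The function name `build_4p_prompt` and its parameters are hypothetical. The wording follows the prompt above.

```python
PROMPT_TEMPLATE = """\
Imagine that you are an OSINT specialist searching for information on the Internet. \
You use a method (listed below) that forces you to think step by step and find \
non-obvious sources of information. You seek information to answer a question \
"{question}".

The method:

You are describing the topic "{topic}" following the next steps.

1. You list the most common nouns (concepts) related to the topic.
2. You list all the processes associated with the words from first step and the \
topic in "subject verb object" format (for example, "a publisher publishes a book").
3. You list information systems where information about the concepts of the topic appears.
4. You list the URLs of information systems where information about the concepts \
of our topic appears.

The answers to each item must be numbered. All lists should be sorted in descending \
order of helpfulness, and each list item should be on a new line. In each answer, \
there must be at least {min_results} results. Clearly specify if some URLs are hypothetical.
"""


def build_4p_prompt(question: str, topic: str, min_results: int = 10) -> str:
    """Fill the 4P prompt template with a search question and a topic."""
    return PROMPT_TEMPLATE.format(
        question=question, topic=topic, min_results=min_results
    )


prompt = build_4p_prompt(
    question="How to buy a rare book in Amsterdam?",
    topic="rare books market",
)
print(prompt)
```

Changing only `question` and `topic` keeps the four steps identical between runs, which makes answers for different domains easier to compare.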

But tools like ChatGPT are useful for OSINT in general, right? There's been much talk about it (for instance). Still, I want to share some thoughts of mine and my experience of creating a ChatGPT OSINT bot (thanks to Gleb Trepalin for the contribution!)

OSINT GPT helpers

Examples:

IntellGPT from Gleb Trepalin (post) — utilises Bing search and data science approaches to let you quickly find what you want.

OSINT Investigator from Aida Kokanović (post) — allows you to build great hypotheses using your context and verify them, explaining sources and tools you may use.

4P Method Search Assistant from Soxoj — yep, I have already made such a helper for the 4P method!

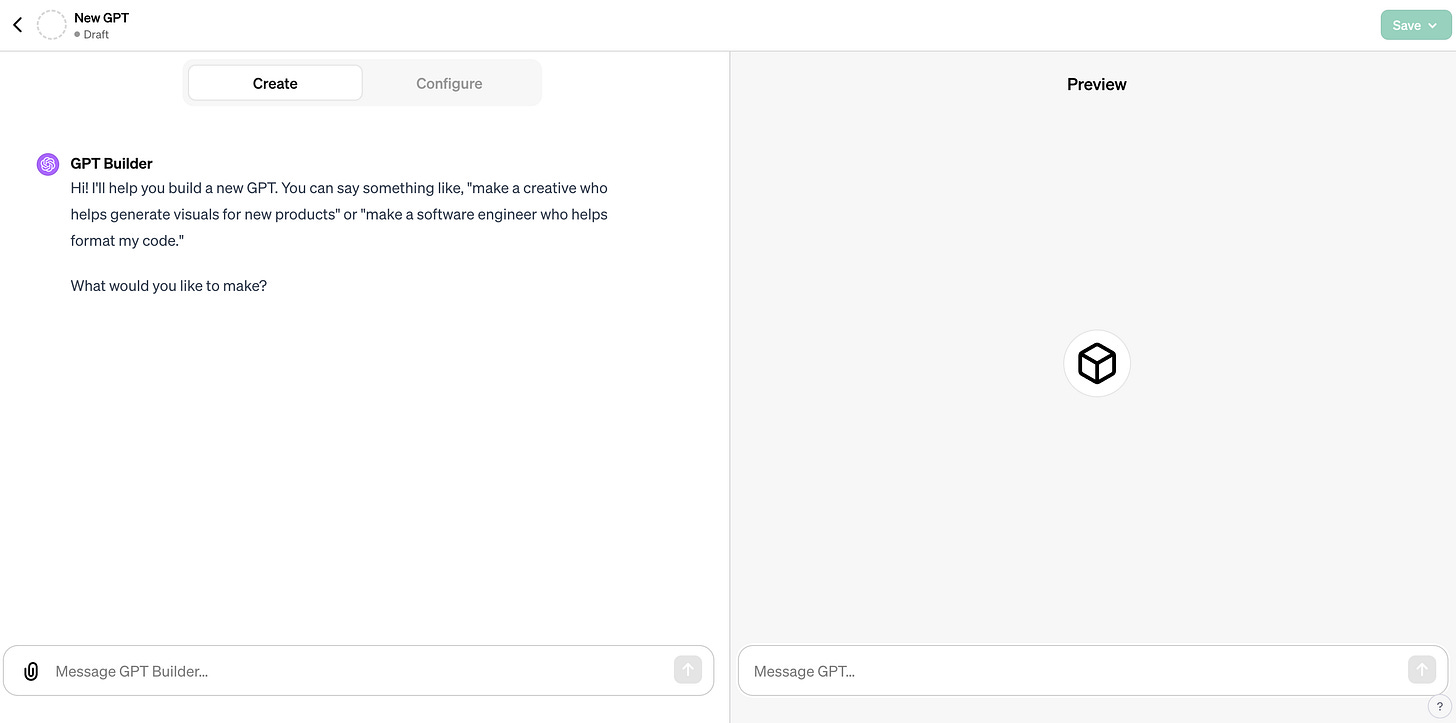

Initially, you can create an agent in dialogue mode or manually configure some things.

GPT agents aren't solely based on ChatGPT architecture. They run on GPT-4, which amalgamates all available features. For each agent, you activate the following features manually:

- Web Browsing

- DALL·E Image Generation

- Code Interpreter (disabled by default).

An agent is fundamentally built from three elements: a general Description, working Instructions, and Conversation starters. These components define the agent's behaviour, with Instructions being the most crucial.

A separate Knowledge section allows you to upload your files to the agent. It could just be a set of leaked documents, your internal knowledge database… I'm even thinking of uploading SOWEL there when it is ready!

For the Instructions, OpenAI can generate text using dialogue mode, asking you for specific things: style of communication, type of answers (step-by-step, to-the-point), and remembering previous messages.

Conversation starters are typical questions for your bot. For IntellGPT they are:

- How do I analyse social media for intelligence gathering?

- Explain the Intelligence Cycle and its importance.

- What are key techniques in OSINT?

- How can I improve data collection in intelligence analysis?

After configuring, you can test the agent right in the sandbox on the same page.

It's important to note that creating GPT agents is free, and OpenAI’s GPT Store promises to be a true gold mine. Why not make your own agent? ;)

IMO, GPT agents for OSINT purposes are just beginning to unfold their potential. Their strengths lie in the vast knowledge of models, the ability to guide users within a predefined framework, and the simple option to ask questions or engage in conversation. This is a significant advantage for both beginners and those tackling unusual tasks (as discussed in this article), and perhaps even for professionals.

We all make mistakes, feel down, or sometimes need conversations to process our thoughts. It's terrific that technology can aid us in improving and contributing to a safer world through OSINT.

Thanks a lot to Dieter Stroobants, Ramingo, OSINT_Tactical, and @godisabsurd for proofreading.